Recently, we faced a huge performance problem with one of our installed apps. The application is a Call Center ERP solution. The Call model is updated very frequently as there are about approximately 8,000 – 10,000 calls being made everyday. For every action there is an audit trail, so updates and inserts into database are huge!

The system is hosted in a private data center – with nginx + passenger setup. It runs on Rails 2.3.11 + MySQL and uses Thinking Sphinx for search indexing. It has been running fine for the past 3 years and over the last few months the client has scaled number of users considerably but nothing that we found alarming.

Lets talk numbers! I am using New Relic terminology here and if you are not familiar with it I do suggest you read and use it. New Relic RPM rocks! We earlier used to have about 25 rpm (requests per minute) which recently scaled to about 45 rpm. Not much increase when it comes to scale in terms of number of users.

However, to our surprise, we started getting a lot of complaints about the system being slow – typically slowing down drastically towards evening. The possible culprits:

- Code. There was minimal code and non-impacting changes since a few weeks before the complaints , so we were pretty sure there was no code issue.

- Infrastructure. Was a possibility, but the server hardware statistics checked out fine. Network card monitoring showed no packet drops.

- Network routing. Routers were changed but the problem persisted.

- Disk IO / swap space. No disk space issues.

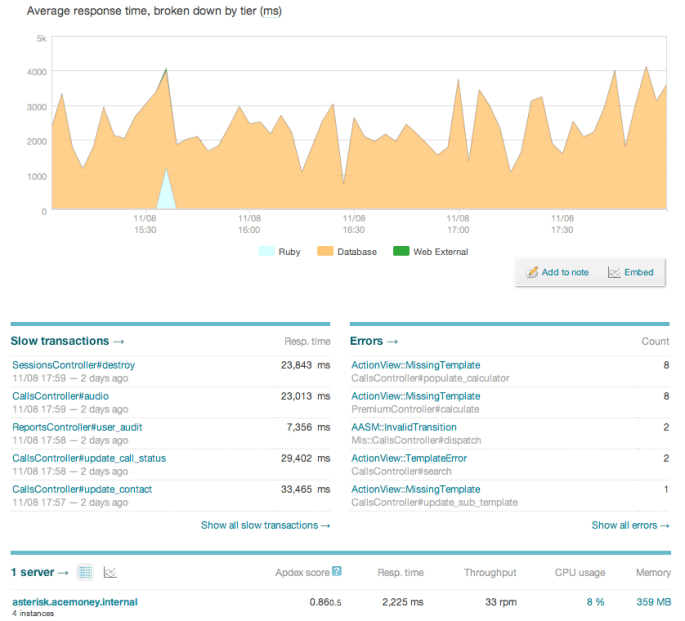

So, we monitored the server using top and found that MySQL was taking up memory in spikes (sometime even more than 200%). To verify our hypothesis, we installed the latest version of New Relic RPM gem and upgraded to New Relic Pro version and went through the details. This is the image we saw:

There are a few things worth noticing. Firstly, there is one particular query (update_call_status) which is taking obscenely long (we saw web requests ranging from 17 seconds – 35 seconds). Poor users!

The orange portion was time spent in database. So, we set about looking into improving the database resources. After much research on improving an InnoDB MySQL performance, we added the following lines in the /etc/my.cnf

innodb_buffer_pool_size = 1600M # earlier value was 800M innodb_flush_log_at_txn_commit = 0

Increasing the pool size allowed MySQL to improve its cache and the flush the commit log on transaction improved speed. Typically innodb_buffer_pool_size recommended value is about 60% of total memory. 0 second commit transaction flush is immediate but if you set it to 1 or more, its not guaranteed to flush the commit log exactly after that interval.

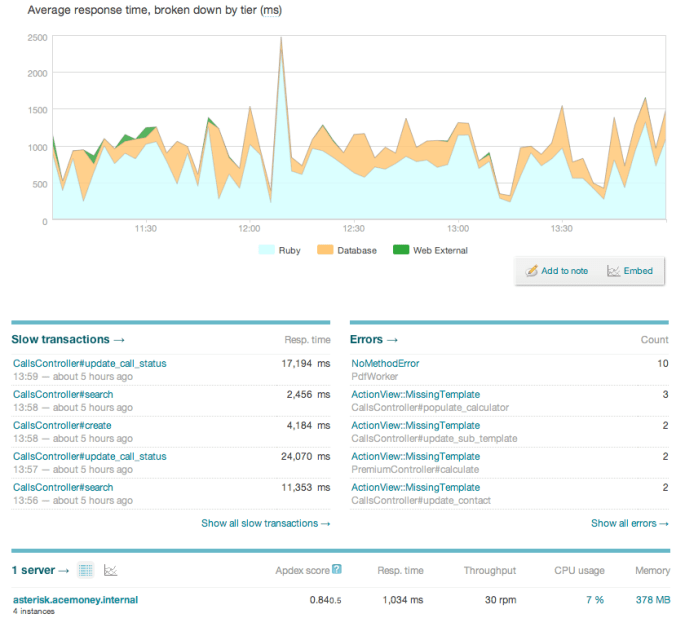

This was the next picture we got in RPM:

Notice that the orange has reduced but the blue (Ruby processing) has increased. We also noticed that the web request time has not reduced. It remains same as earlier. So, though we have indeed improved the resources of MySQL and lowered database time, its has not solved the problem!

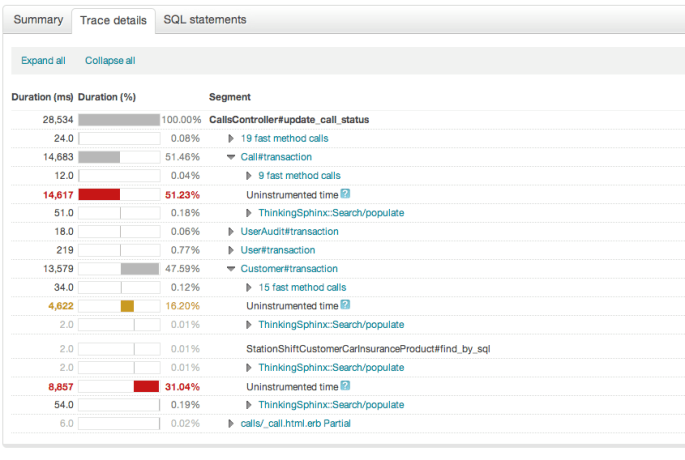

Next we analyzed the Call Trace and found this:

Hang on — Uninstrumented time – wtf! RPM says its the time spent where we cannot analyze where the control went. We add tracer_method to thinking sphinx methods (ThinkingSphinx::Search showed up) but we still got uninstrumented time. Now what more tracer methods could be add? !! Having limited options here, we dug into various gem code.

On expansion of the trace, the call log pointed to ‘after_commit’ gem for db_update_transaction. Hmm.. into the thinking sphinx scope now. We added some log statements into after_commit gem but it did not show any delay. So, we thought back and reviewed how we are using Thinking Sphinx here.

Delta Indexes! Could they be causing trouble? We added a log statement here to see if delta indexing is causing trouble:

# File: thinking-sphinx-2.0.1/lib/thinking_sphinx/active_record/delta.rb

def index_delta

Rails.logger.info("+++ #{Time.now.to_i}"

delta_object.index(self, instance)

Rails.logger.info("+++ (exit) #{Time.now.to_i}"

VOILA! We nailed it – the time difference between these 2 log statements was over 10 seconds. But what does that explain and how do we solve it?

RTFM

We read the configuration about how we can manage delta indexed. There are 3 ways to do this:

- Default: Spawn the indexer every time a delta is issued (UPDATE / INSERT) queries.

- DateTimeDelta: Do not spawn the indexer immediately but at most after a threshold

- DelayedDelta: Use delayed job for spawning the indexer

Now, in every request to the culprit – ‘update_call_status’, there are about 5 UPDATE and 3 INSERT calls. Using the default indexing strategy, that triggers the indexer about 8 times per second. Considering about 40 rpm for update_call_status, it means 320 calls to indexer per minute. Considering that this was a system running for about 8 hours a day…. oh I lost count, do the math!

Considering the symptoms “server works fine in the morning and slows down crazily by evening”, its starting to make sense. We had a index rebuild task around mid-night, so it would rebuild the TS index.

We reconfigured our delta indexes with datetime deltas with a threshold of 1 hour.

class Call < ActiveRecord::Base

define_index do

...

set_property :delta => :datetime, :threshold => 1.hour

...

end

...

end

Since we need the rake tasks, add the ts-datetime-delta gem to the Gemfile.

#Gemfile gem 'ts-datetime-delta', :require => 'thinking_sphinx/deltas/datetime_delta'

Add a cron job to the frequency of the threshold value you have set:

0 * * * * (cd <path to your Rails.root>; rake ts:in:delta)

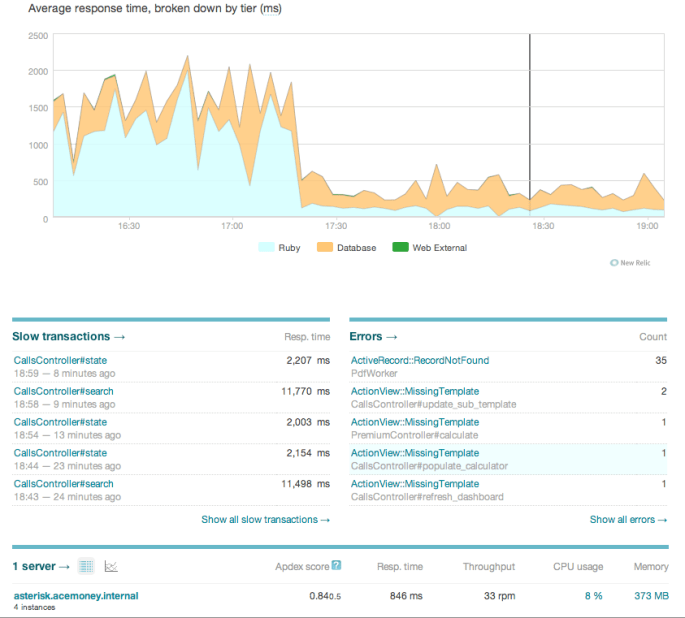

When we restarted our app server, we saw a totally different and pleasant sight!

We brought down the server response time to under 500ms and average web response times to under 1.5 seconds.

Problem resolved! Woot!